What is the problem?

The black box phenomenon

Internet platforms, such as Facebook, Twitter, YouTube, WeChat, and TikTok, enable exciting opportunities for expression, but can also be sites of online harms, such as the posting of child abuse imagery and terrorist propaganda, the spread of hate speech and disinformation, and the facilitation of bullying and abusive activity. To counter such online harms, platforms identify problematic content or behaviour and respond by deleting or restricting it, in a process known as content moderation. Alongside human reviewers called "content moderators", platforms use automation and AI to identify and respond to problematic content and behaviour. The benefit of algorithmic content moderation (ACM) is that it is a fast and globally scalable way to prevent offensive content being uploaded and travelling across the globe within seconds. It can also spare human content moderators some of the tedium of a very repetitive job, as well as the trauma of viewing the most distressing content, such as child abuse imagery.

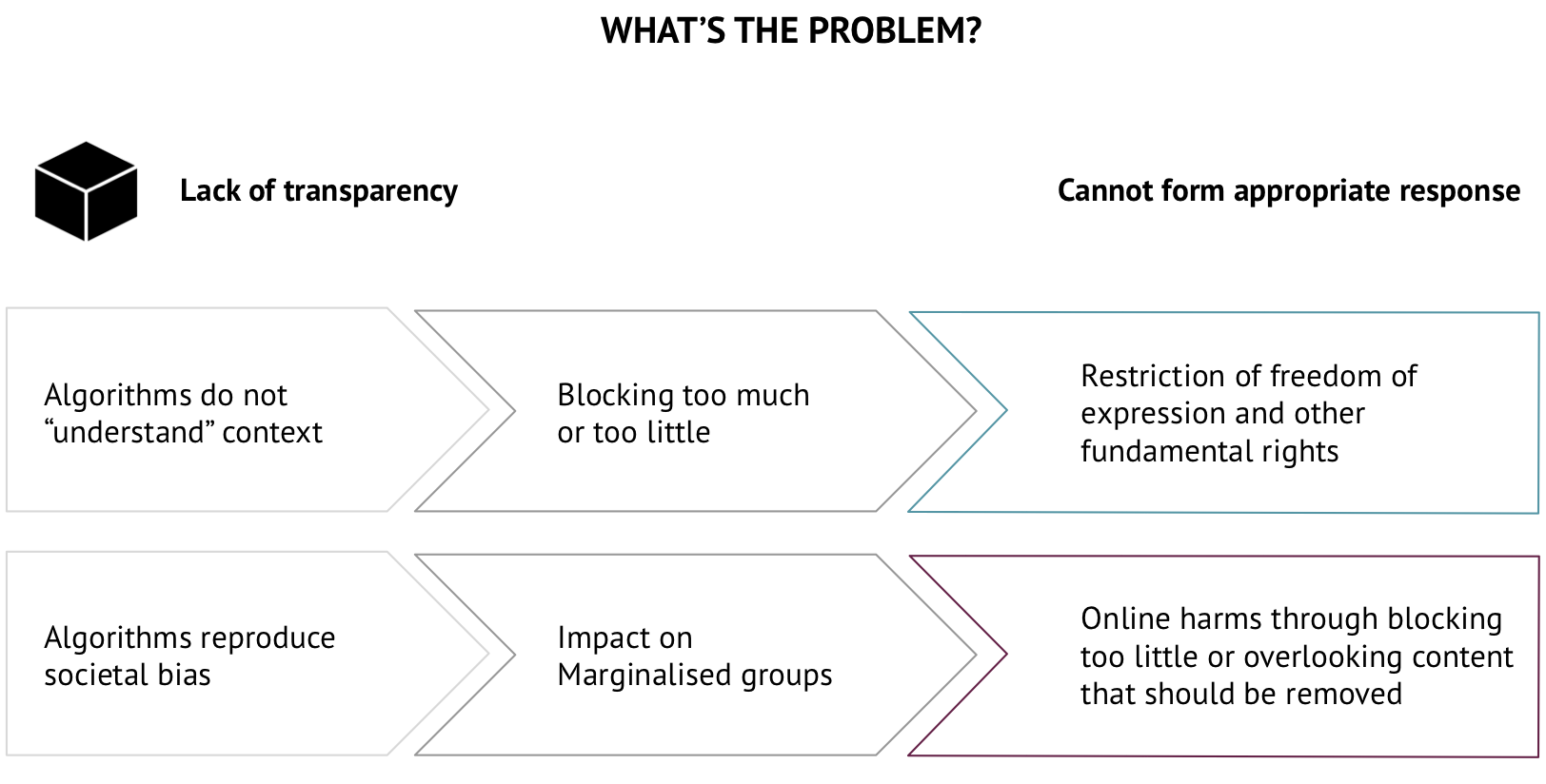

ACM, however, comes with its own risks. It does not understand context and can either block too much content or too little. "Overblocking" restricts free expression and creativity on the Internet, and can unfairly punish marginalised groups (for example, by misinterpreting certain dialects or vernacular as hate speech). Alternatively, ACM can miss abuses that would otherwise be picked up by human reviewers. Although content moderation algorithms play a central role in shaping public discourse and have a concrete impact in the “real” world, platforms release very limited information about them to the public and governments.

A lack of transparency prevents public oversight and obstructs regulators from formulating appropriate responses that would protect individual rights and public interests. Furthermore, a lack of transparency produces elevated expectations about the capabilities of algorithms as a "magic wand" for content moderation, while misleading regulators about which kinds of measures can be technically implemented and which cannot. We should not expect more transparency to be a “magic wand” in itself, but it is an important first step towards appropriate approaches for future regulation and accountability mechanisms. Major platforms are planning to use more automation and AI in the future, so this issue will become ever more important.

What do platforms tell us?

Platforms’ disclosure of algorithmic content moderation practices

Platforms use a range of different technologies in content moderation, from simple bots that delete content with certain phrases to complex ML algorithms that teach themselves which characteristics to look for when scanning content. Platforms are particularly open about the fact that they use matching technology to block content, such as child abuse imagery and terrorist propaganda. However, they are less open about how they automatically identify and remove other kinds of content which are potentially harmful, but are not illegal.

Matching technologies aim to detect files that are the same as a file that has already been uploaded.

Hashing is a technology used to produce a fingerprint (known as a hash) of a multimedia file, which is then matched against a collection of hashes in a database. For example, there are databases of hashed images that have already been identified as child sexual abuse imagery. The hash of an image uploaded to a platform can be compared against the hashes in this database. If an abusive image is re-uploaded on social media, it can be automatically detected, without a human having to review it.
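The matching step can be illustrated with a minimal sketch. It is not any platform's actual system: the function names and the sample byte strings are hypothetical, and it uses a cryptographic hash (SHA-256) purely for simplicity. A cryptographic hash only matches byte-identical files, whereas matching systems used in practice generally rely on perceptual hashes designed to tolerate small alterations.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """Return a hex SHA-256 digest, used here as the file's 'hash'."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical database: hashes of files already identified as violating.
known_hashes = {fingerprint(b"previously-identified-file-bytes")}


def is_known_violation(upload: bytes, database: set) -> bool:
    """True if the upload's hash matches an entry in the hash database."""
    return fingerprint(upload) in database


reupload = b"previously-identified-file-bytes"
altered = b"previously-identified-file-bytes."  # one byte appended

print(is_known_violation(reupload, known_hashes))  # True: exact re-upload
print(is_known_violation(altered, known_hashes))   # False: any change defeats SHA-256
```

The second result shows why exact hashing alone would be easy to evade, and why the choice of hashing technique is itself a piece of information worth disclosing.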

Classification technologies aim to find new violations in uploaded content by looking for patterns, e.g. in text or images. Based on pattern identification, the algorithms classify the content into predefined categories, such as nudity/not nudity. This can involve a range of different technologies, such as rules-based AI or ML. For example, platforms can look for hate speech by identifying certain keywords. In a further example, algorithms look for patterns in shapes and colours in an image that indicate that it could contain nudity.
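The keyword example can be sketched as a very small rules-based classifier. This is an illustration under stated assumptions, not a description of any platform's tooling: the keyword list, the category labels, and the function name are all hypothetical placeholders.

```python
import re

# Hypothetical keyword list; a real list would be policy- and language-specific.
BLOCKED_KEYWORDS = {"badword", "slur"}


def classify(text: str) -> str:
    """Classify text as 'violating' or 'not_violating' by keyword match only."""
    tokens = set(re.findall(r"[a-z']+", text.lower()))
    return "violating" if tokens & BLOCKED_KEYWORDS else "not_violating"


print(classify("You are a badword"))                     # violating
print(classify("Calling someone a badword is hurtful"))  # violating (context ignored)
print(classify("Have a nice day"))                       # not_violating
```

The second sentence criticises the abusive term rather than using it against someone, yet it receives the same label. This is the kind of context blindness that leads to overblocking.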

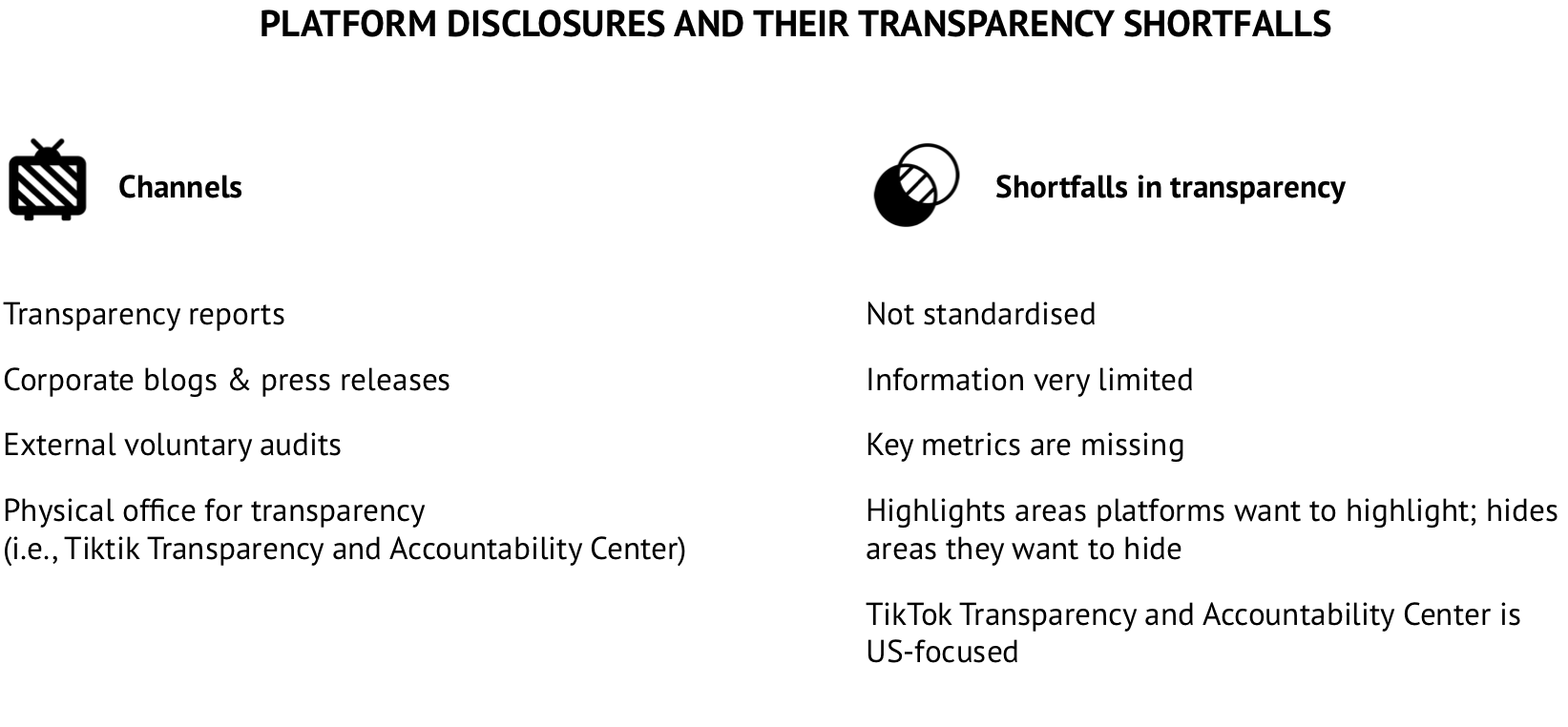

Platforms publish limited information about the use of ACM on an ad hoc basis, primarily through quarterly or biannual transparency reports about content moderation as a whole. The quality of information contained in these reports varies greatly, especially when it comes to the use of automation and AI. For example, the YouTube transparency report publishes the number of videos that are automatically taken down without human review, while the Twitter transparency report only contains overall numbers for deleted posts without differentiating between automation and human reviews (see Appendix 3 for more about the information contained in transparency reports). Platforms also publish ad hoc information on their corporate blogs and press releases, but this information is often superficial and difficult to find. Another means is through audit reports by external experts that have been commissioned by the platform, such as the Facebook Data Transparency Advisory Group. However, these reports do not give much detailed information and are intended to give recommendations to the platforms, and not to regulators. This way of sharing information does not fit the needs of the general public or regulators.

In 2020, TikTok announced a Transparency Center at its headquarters in Los Angeles, USA. The physical opening has been delayed because of the COVID-19 crisis, so the company is offering virtual tours to journalists and experts, including a look at "[their] safety classifiers and deep learning models that work to proactively identify harmful content and [their] decision engine that ranks potentially violating content to help moderation teams review the most urgent things first". This is a step in the right direction. It seems, however, that the center will not be open to the public, and that TikTok, not regulators, will decide which information it shares. It also seems to be focused on the US market, so it might not give insight into content moderation in other parts of the world.

A significant barrier to transparency is that the information contained in transparency reports (or indeed other information channels) is not standardised across platforms. Platforms have not yet provided direct information about how accurate algorithms are, and very seldom publish how much content is deleted automatically without human review. It is therefore difficult for regulators and the public to understand how much content is being erroneously deleted or being deleted without human intervention. Often, facts are hidden behind obscure metrics, such as Facebook’s "proactive rate", which describes the percentage of content actioned that was discovered before it was reported by a user. This could mean the percentage of content actioned that was identified by algorithms, but it could also include other platform initiatives to find content, so it is not clear.
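A short worked example shows why this metric is ambiguous. The calculation follows the definition quoted above; the two platforms and all of their figures are invented for illustration, as is the split between "algorithm" and "other initiatives".

```python
def proactive_rate(found_before_user_report: int, total_actioned: int) -> float:
    """Percentage of actioned content that was found before any user report."""
    if total_actioned <= 0:
        raise ValueError("total_actioned must be positive")
    return 100.0 * found_before_user_report / total_actioned


# Hypothetical breakdowns of actioned content for two imaginary platforms.
platform_a = {"found_by_algorithm": 900, "found_by_other_initiatives": 50, "user_reported": 50}
platform_b = {"found_by_algorithm": 400, "found_by_other_initiatives": 550, "user_reported": 50}


def rate_for(platform: dict) -> float:
    """Compute the proactive rate from a breakdown that reports rarely publish."""
    proactive = platform["found_by_algorithm"] + platform["found_by_other_initiatives"]
    total = proactive + platform["user_reported"]
    return proactive_rate(proactive, total)


print(rate_for(platform_a))  # 95.0
print(rate_for(platform_b))  # 95.0
```

Both platforms report the same 95% proactive rate, although algorithms found 90% of actioned content on the first and only 40% on the second. The published figure alone cannot distinguish the two cases.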

Regulators should be aware that platforms use transparency reports to draw attention towards certain topics and away from others. For example, by disclosing numbers of government information and takedown requests, platforms draw attention to the problem of government surveillance and censorship. This issue is deserving of attention, but at the same time, it also draws attention away from the role of platform companies in online speech, including the influence of proprietary content moderation technologies.

Legislative landscape

Shortfalls of current legislation in ensuring meaningful transparency about the use of algorithmic content moderation systems

Most Internet and platform regulations currently in force do not include provisions that guarantee effective transparency and accountability from digital platforms in the use of their content moderation systems (human or automated). This is particularly true of laws that provide for limitations on the liability of digital platforms for third-party content. Much of this legislation was introduced around 20 years ago (e.g. US CDA 230, and EU E-Commerce Directive), when the use of automated content moderation was still in its infancy. Nonetheless, even more recent laws (e.g. the Brazilian Civil Rights Framework for the Internet) do not directly address the growing importance of new technologies employed by platforms for identifying and filtering illegal content, such as AI.

Many of the national AI strategies that have recently been adopted or are currently under discussion in different countries highlight the importance of the ethics of AI, including the importance of adopting legal frameworks that ensure that AI applications are transparent, predictable, and verifiable. However, the existing legislation on platform regulation, as a rule, still does not take into account the risks that the use of AI systems poses for public discourse, freedom of speech, and other fundamental rights. It also treats content moderation by human and automated systems as a single entity, without differentiating between them. As a consequence, although some of these laws provide for general transparency requirements, such as the obligation to publish transparency reports, they fall short by not providing for explicit, mandatory transparency mechanisms in the use of ACM systems, as we can see from some recent national laws and bills that introduce regulations on the topic (see Appendix 1).

In this context, the EU General Data Protection Regulation (GDPR) has already signaled an important change in the thinking around the regulation of automated systems. The GDPR requires the "data controller" to inform “data subjects” when they have been subject to fully automated individual decision-making, which is possible only in the specific cases provided for in Article 22. It also introduces mechanisms that allow data subjects to request human review or challenge the decision. Alongside the right to know how platforms handle their personal data, users should also have the right to know how platforms handle their content, especially if they remove it. Now, with the Digital Services Act (DSA), currently under discussion in the European Commission, European regulators seem to be looking for solutions to ensure more transparency and accountability in the use of ACM systems by platforms and to set the standards for other countries, as they did with the GDPR.

Policy recommendations

How to improve the transparency and accountability of algorithmic content moderation systems

It is important to make clear that regulating ACM does not mean legally requiring the use of automated systems. The idea here is that regulators should be aware that algorithmic tools, although necessary, have substantive flaws and often make mistakes, and that platforms, despite their transparency efforts, are not clear about that. Therefore, it is necessary to implement rules that guarantee more transparency in platforms’ decision-making process in content moderation, so it can be subjected to public argumentation and contestation. Moreover, governments should have access to this information, so they can make informed decisions in their regulatory responses to the problems that might emerge in the process. To that end, specific and binding disclosure rules should be the first step towards more transparency.

This does not mean, however, that all data to be disclosed by platforms should necessarily be provided for in law, especially in light of the technical aspects involved in the use of algorithmic systems and their rapid technological development. While lawmakers should work on provisions that clearly define platforms’ transparency obligations and explicitly enforce data disclosure on the use of these systems, with legal safeguards against the undermining of privacy, freedom of speech, and other fundamental rights, they should also leave room for further regulation by the relevant public authority with appropriate technical and policy expertise.

This public authority could be an existing authority, preferably an independent public authority with key transparency and due process obligations, that would absorb these new competences related to the implementation of binding disclosure rules. However, it should work in a multi-stakeholder arrangement, with a committee, panel, or group composed of interested parties, which would be responsible for providing assistance and advice in the formulation of common technical standards (e.g. metrics) and statutory instruments that clearly establish how and what kind of data should be disclosed by platforms. This would guarantee more flexibility and technical expertise in the implementation of disclosure rules. That is why a legal requirement for the implementation of content moderation appeal systems by platforms and the establishment of a regulatory regime that involves a multi-stakeholder approach in the rulemaking process are also recommended in this policy brief. The increasing deployment of ACM should be accompanied by governmental oversight, accountability, and appeal mechanisms.

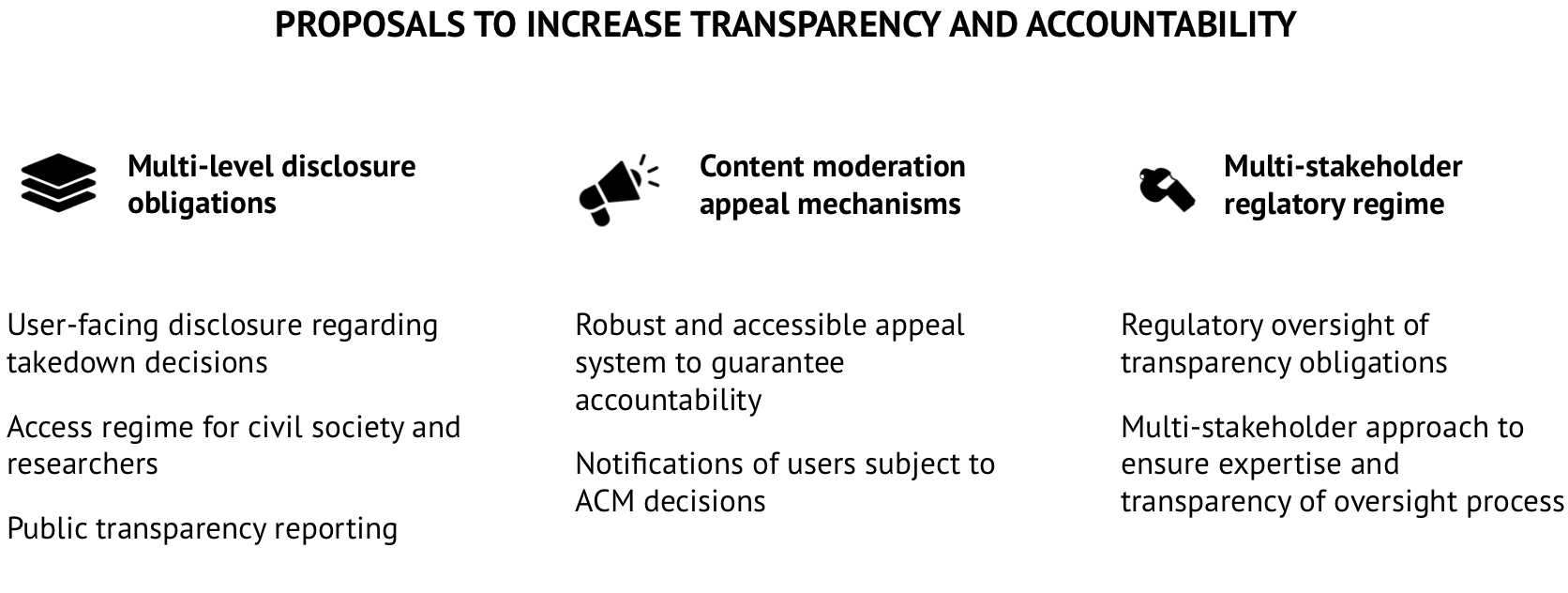

Adoption of multi-level disclosure rules

The adoption of multi-level specific and binding disclosure rules is an important mechanism for increasing transparency, without excessively intervening in platforms’ business models. Mandatory transparency reports with only general provisions on what should be disclosed have proven insufficient. Without specific and binding disclosure obligations, platforms decide what data and information to disclose and how they will disclose them, which, in many ways, obstructs independent studies and external oversight. The option to provide for disclosure obligations on multiple levels allows governments to share oversight responsibilities with different actors (e.g. civil society, academia, and users), opting for a multi-level accountability regime. Independent researchers should have access to data that allow them to audit algorithms and undertake impact assessments of these algorithms, e.g. for public discourse and freedom of speech. Suggestions for multi-level disclosure:

User-facing disclosure: In addition to notifying the user of a takedown and offering the option to appeal, platforms should be required to notify users if the takedown is a result of an automated decision without human review.

Civil society and research disclosure: Platforms should be required to give researchers access to algorithmic tools for the purposes of algorithmic auditing, and to archived databases of deleted content, along with records of their efforts to tackle that content. This access should be available for most categories of content, such as copyright infringement, hate speech, and disinformation. For certain categories, such as child sexual abuse imagery and image-based abuse ("revenge porn"), there are ethical arguments against providing access. Such considerations could be negotiated in a co-regulatory structure.

General disclosure: Transparency standards should be imposed on large commercial platforms requiring information to be disclosed in a standardised form, while also allowing for differences between platforms (e.g. differences in community guidelines, or what counts as a violation). This includes provisions that oblige platforms to incorporate in their transparency reports particular information, such as:

- Estimated accuracy rates for flagging and how accuracy is defined.

- Roll-outs of new AI systems, since content moderation is geographically patchy across the globe: which AI system is being rolled out, in which jurisdiction, and for which kinds of violations (e.g. a new roll-out of AI against hate speech in a particular country).
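The first item in the list above asks platforms to state how accuracy is defined, and a small calculation shows why that matters. The sketch below applies three standard definitions (overall accuracy, precision, and recall) to the same set of flagging outcomes. The brief does not prescribe any of these definitions, and the counts are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class FlaggingCounts:
    true_positives: int   # violating content correctly flagged
    false_positives: int  # legitimate content wrongly flagged (overblocking)
    false_negatives: int  # violating content that was missed
    true_negatives: int   # legitimate content correctly left alone


def accuracy(c: FlaggingCounts) -> float:
    """Share of all decisions that were correct."""
    total = c.true_positives + c.false_positives + c.false_negatives + c.true_negatives
    return (c.true_positives + c.true_negatives) / total


def precision(c: FlaggingCounts) -> float:
    """Share of flagged items that were actually violating."""
    return c.true_positives / (c.true_positives + c.false_positives)


def recall(c: FlaggingCounts) -> float:
    """Share of violating items that were flagged."""
    return c.true_positives / (c.true_positives + c.false_negatives)


# Hypothetical sample of 10,000 posts, of which 100 are violating.
counts = FlaggingCounts(true_positives=80, false_positives=120,
                        false_negatives=20, true_negatives=9780)

print(accuracy(counts))   # 0.986
print(precision(counts))  # 0.4
print(recall(counts))     # 0.8
```

In this invented sample, a platform could truthfully report "98.6% accuracy" even though 60% of the content it flagged was legitimate. A disclosure rule that requires the definition alongside the figure would make such differences visible.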

Implementing content moderation appeal systems

The implementation of robust and accessible content moderation appeal systems is considered an essential mechanism to guarantee more accountability from platforms. It is in line with users’ right to information, particularly to be notified when they are subject to automated decisions without human review, since they should also have the right, for example, to appeal against any decision they consider wrong. ACM without human review entails the risk of content being wrongly removed, and as we have seen during the COVID-19 crisis, its use is on the increase. Appeal mechanisms have become even more necessary to guarantee the rapid reinstatement of any legitimate content or account that was wrongly removed, mitigating potential harmful effects for public discourse, freedom of speech, and other user rights.

Establishing a multi-stakeholder-based regulatory regime

The establishment of a regulatory regime that involves a multi-stakeholder approach in the rulemaking process is also a key mechanism to guarantee more effectiveness and efficiency in the implementation, regulation, monitoring, and enforcement of disclosure rules. The relevant public authority should include in its decision-making process a multi-stakeholder group, with representatives of interested parties, such as platforms, independent researchers, users, and civil society organizations. This multi-stakeholder group should provide assistance and advice to the public authority in drafting common disclosure standards and rules. It could also assist the public authority in monitoring whether platforms are complying with their disclosure obligations in a timely, accurate, and complete manner. It could, for example, advise on cases involving non-compliance complaints against platforms and the application of sanctions in case of non-compliance. There is not one single model for the adoption of a multi-stakeholder approach. This multi-stakeholder group could be part of the public authority structure or an external advisory group, depending on the existing infrastructure or the preferences of the country or region in question. Governments have already adopted this kind of structure for other regulated sectors, so they can look at them as a reference.

There are other suggestions that also propose a multi-stakeholder approach, particularly from civil society groups. One example is the creation of an independent, accountable, and transparent multi-stakeholder body, which would be responsible for elaborating and implementing the technical and practical remedies necessary to guarantee more transparency and accountability from platforms, as set out in the law. In this case, some of the competences of the relevant public authority would be transferred to the independent multi-stakeholder body, which would be monitored by this public authority. This would also be a viable solution and, depending on the public structure and resources available in each country, using existing infrastructure and directly involving existing stakeholders could be an option to reduce some of the costs involved in the implementation of these rules by states.

In order to achieve the expected results with the adoption of these transparency and accountability mechanisms, one of the biggest challenges for governments will be to gather the knowledge and technical expertise necessary to implement them. That is why it is so important to have a multi-stakeholder approach already in the regulatory phase. The option of sharing responsibilities with other stakeholders through a multi-level accountability regime, in our view, is one of the best solutions to tackle platforms’ lack of transparency in the use of ACM systems.