Executive Summary

Results from the Platform://Democracy project

Social media platforms have created private communication orders which they rule through terms of service and algorithmic moderation practices. As their impact on public communication and human rights has grown, different models to increase the role of public interests and values in the design of their rules and their practices have, too. But who should speak for both the users and the public at large? Bodies of experts and/or selected user representatives, usually called Platform Councils or Social Media Councils (SMCs), have gained attention as a potential solution.

Examples of Social Media Councils include Meta’s Oversight Board, but most platform companies have so far shied away from installing one. This survey of approaches to increasing the quality of platform decision-making and content governance, which involved more than 30 researchers from all continents brought together in regional “research clinics”, makes clear that trade-offs have to be carefully balanced. The larger the council, the less effective its decision-making, even if its legitimacy might be increased.

While there is no one-size-fits-all approach, the project demonstrates that procedures matter, that multistakeholderism is a key concept for effective Social Media Councils, and that incorporating technical expertise and promoting inclusivity are important considerations in their design.

As the Digital Services Act becomes effective in 2024, a Social Media Council for Germany’s Digital Services Coordinator (overseeing platforms) can serve as a test case and should be closely monitored.

Beyond national councils, there is a strong case for a commission focused on ensuring human rights online. It can be modeled after the Venice Commission and can provide expertise and guidelines on policy questions related to platform governance, particularly those that affect public interests, such as special treatment for public figures and for mass media, and algorithmic diversity. The commission can be staffed by a diverse set of experts from selected organizations and institutions established in the platform governance field.

Ground Rules for Platform Councils

How to Integrate Public Values into Private Orders

Summary

- How to make digital spaces more democratic? Social media platforms have created – in principle – legitimate private communication orders which they rule through terms of service and algorithmic moderation practices. As their impact on public communication and human rights has grown, different models to increase the role of public values in the design of their rules and their practices have, too. But who should speak for both the users and the public at large? Bodies of experts and/or selected user representatives, usually called Platform Councils or Social Media Councils (SMCs), have gained attention as a potential solution. To ensure public values in these hybrid communication spaces, private actors have been asked to legitimize their primacy and incorporate public elements, such as participatory norm-setting processes and ties to human rights. The Oversight Board by Meta represents one of the first attempts to open up the decision-making system of a commercial platform to the “outside”.

- How to design platform councils? The idea behind platform councils is to increase inclusivity in decision-making and communication space-as-product design. However, there is limited evidence on how to best construct a platform council, and the most effective approach seems to be one of regulated self-regulation. This means combining commitments by states to develop a normative framework and engaging platforms’ interest to meet compliance obligations. Platform councils could be established at different levels in different set-ups in order to iterate attempts to increase the legitimacy of platform rules and algorithmic communication governance orders.

- How global should platform councils be? Different types of social media councils (national, regional, and global) can be effective in addressing social media governance issues that vary in scope, context, and objectives. Multi-level collaboration and coordination among different levels of councils may be necessary to effectively address the complex and multifaceted challenges of social media governance.

- Should they be staffed by experts or be as broad as possible? Incorporating technical expertise into platform council decision-making is crucial, but requires balancing the need for pertinent information with the importance of ensuring the independence of the expert's perspective. Inclusivity and promoting marginalized and minority groups are important considerations in the design of social media councils.

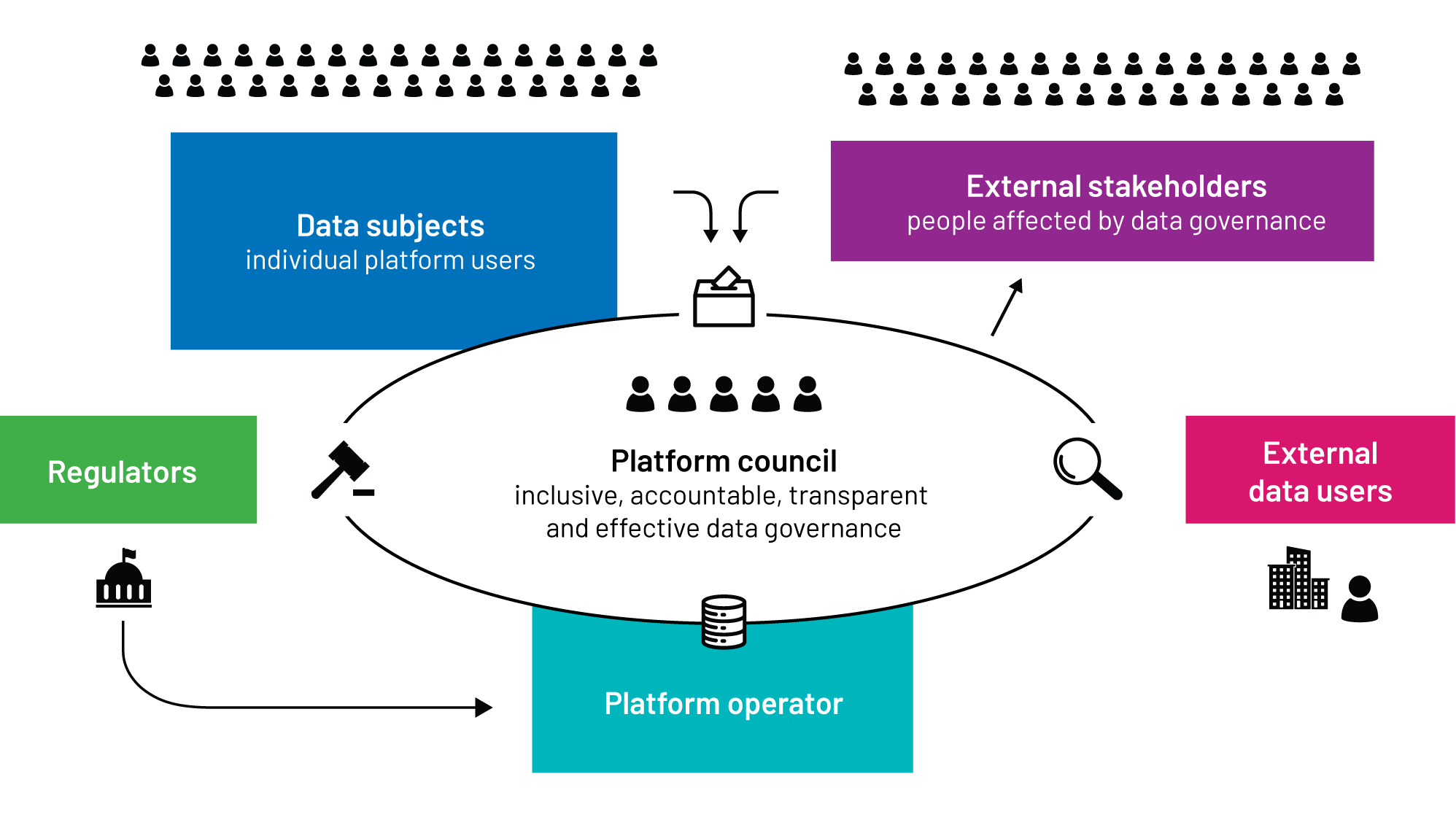

- Multistakeholderism as a key concept: To effectively address both global and local challenges and interests, SMC governance structures must be tailored to meet the needs of all, including non-users and non-onliners. This is where the concept of multistakeholderism comes into play. SMCs should have a diverse range of members, including users, experts, and citizens, and should be set up through a multi-stakeholder process that involves all relevant actors. Together with public consultation measures, this ensures that the decisions made by the SMC are fair, transparent, and representative of all stakeholders' interests.

- Drawbacks and trade-offs: While SMCs can provide more legitimacy to the rules and algorithmic practices of platforms, there are also drawbacks and trade-offs to consider. These include a weakening of state regulators, confusion of responsibility, ethics-washing, a normative fig-leaf effect, and a globalist approach to speech rules that is non-responsive to local and regional practices. Additionally, incorporating too many non-experts into the assessment of rules and algorithmic practices can be problematic. The need for access to specific information about a platform's operations raises issues of confidentiality, which can limit the effectiveness of an SMC.

- Europe’s approach: The new European digital law packages include references to civil society integration into the national compliance structures of the Digital Services Act without specifying how exactly this process should be implemented. The German national implementation law includes a reference to a cross-platform social media council to be set up by parliament to advise the national Digital Services Coordinator.

- What way forward? Beyond national councils, there is a strong case for a commission focused on ensuring human rights online. It can be modeled after the Venice Commission and can provide expertise and guidelines on policy questions related to platform governance, particularly those that affect public interests, such as special treatment for public figures and for mass media, and algorithmic diversity. The commission can be staffed by a diverse set of experts from selected organizations and institutions established in the platform governance field.

Increasing the justice of hybrid speech orders

- “We are not a democracy”, proclaim the internal rules of Midjourney, the research lab behind the AI-based image generator of the same name. “Behave respectfully or lose your rights to use the Service”. Indeed, companies are not democracies: they are not run by elected representatives, and their business models are not voted on directly, only indirectly through the power of users, if the markets they are active in function well.

- And yet: the spaces of communication and the communicative infrastructures of democratic public spheres are subject to considerable processes of change. As the European Court of Human Rights noted in its 2015 Cengiz ruling, “the Internet has now become one of the principal means by which individuals exercise their right to freedom to receive and impart information and ideas, providing [...] essential tools for participation in activities and discussions concerning political issues and issues of general interest.” Basic questions of our society are being negotiated in spaces that are increasingly algorithmically optimized and normatively shaped by digital platforms.

- Socio-communicative spaces have not only been enriched by taking on a digital dimension, they have also changed in character: a majority of online communication takes place in privately owned and regulated communicative settings. The key questions regarding how to enable, moderate and regulate speech today have to be asked and answered with a view to private digital spaces. These changes in communicative spatiality take nothing away from the primary responsibility and ultimate obligation of states to protect human rights and fundamental freedoms, online just as offline. However, over the last decade, tension between the normativity inherent in the role of states and the facticity of online communicative practices that are being primarily regulated by the rules of private actors has increased in intensity.

- Traditional approaches to the involvement of citizens in the development of speech rules for online spaces do not work, as tried and tested democratic principles cannot easily be translated to allow user participation in the design of private selection algorithms and moderation practices. Just as platforms themselves have become rule-makers, rule enforcers and judges of their decisions, politicians have recognized the importance of considering public contributions to private speech rules. In the German ruling coalition’s multi-party agreement, the government committed itself to “advancing the establishment of platform councils”. In a similar vein, the German Academies of Sciences and Humanities called for the participation of “representatives of governmental and civil society bodies as well as (...) users (...) in decisions about principles and procedures of content curation”.

- States – through laws and courts – have laid down ground rules for platforms to accept, for example in relation to how they treat users and how to handle illegal content. To a growing extent, therefore, private normative orders and state legal orders are interacting to create neither solely private nor public, but hybrid forms of governance of online communication. This makes eminent sense: the balancing of interests on platform-based online communication invokes issues of public interest, but is the result of the private product design of platform providers.

- Orders of power are either public or private or hybrid. Private orders, often called “Community Standards” and based on contracts, are legitimate and often successful in regulating communication spaces. In recent years, private orders have been infused with public elements, as these communication spaces are increasingly carrying democratic discourse, thus creating a hybrid order of speech governance.

- Hybrid normative orders are characterized by both private and public features relating to ownership (often private), scale (of public impact), participants (both private and public, i.e. state, actors), and values (private goals, sometimes aligned with, but sometimes conflicting with public values). Within hybrid orders, difficult normative questions emerge as to the application of fundamental and human rights (Drittwirkung; horizontal application) and the role that third parties (non-users, the public, society) should play.

- States have started to regulate private and hybrid communication spaces with a view to fulfilling their obligation regarding coordination and cooperation to ensure public values. At the same time, private actors have been asked to further legitimize their primacy over communication spaces. This is a challenge that the founder of the Leibniz Institute for Media Research | Hans-Bredow-Institute, the pioneer of German broadcasting, Hans Bredow, wrote about 75 years ago. He suggested the creation of a council to represent the population in broadcasting decisions. The challenge now is to learn from this approach in the digital age, especially with regard to private and hybrid communication spaces.

- In this project, when we speak of “enhanced legitimacy” of norm-setting and norm-enforcement by platforms, we mean that elements are added that make the impact of private action on public communication appear (more) just. This can happen by making the norm-setting process itself more participatory, but also by tying it more closely to human rights.

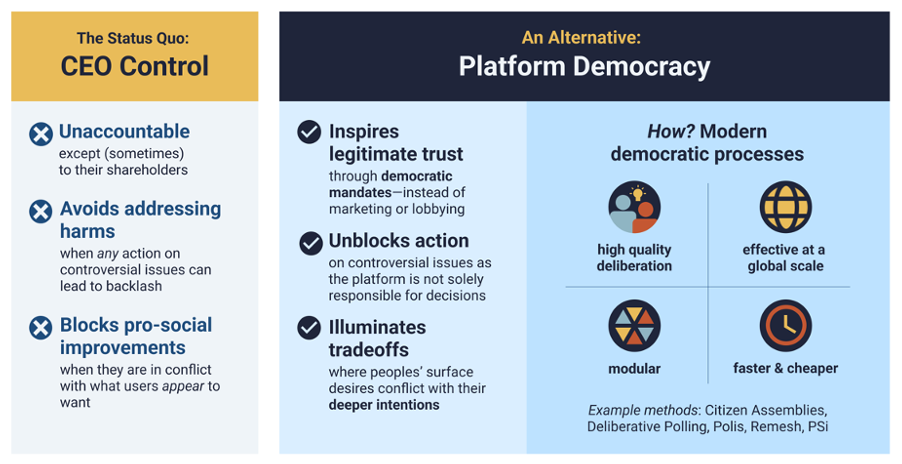

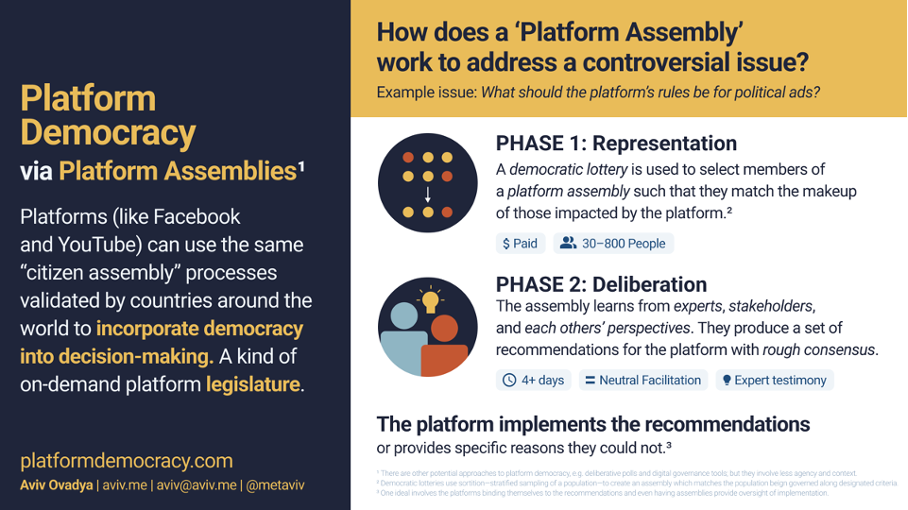

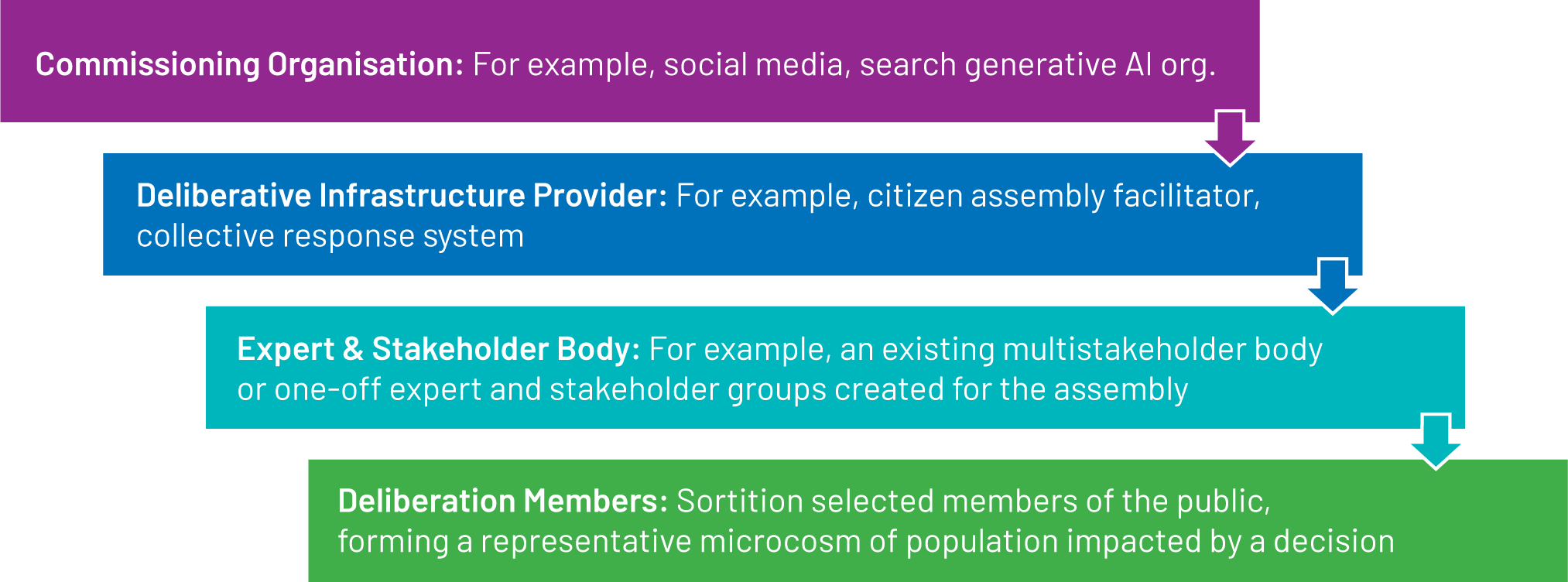

On the development of platform councils

- In 2021 we published an introductory study on Social Media Councils, exploring the concept and their origins in media councils. “To build trust, platforms should try a little democracy”, suggested Casey Newton. ARTICLE 19 published a report on their Social Media Councils experiment in Ireland. Aviv Ovadya’s “Towards Platform Democracy: Policymaking Beyond Corporate CEOs and Partisan Pressure” (Belfer Center for Science and International Affairs) is a committed plea for platform democracy through citizen assemblies. A useful taxonomy is provided by the “Operational Approaches” of the Content & Jurisdiction Program of the Internet & Jurisdiction Policy Network. In a recent paper, Rachel Griffin points to the existence of two approaches to alleviate legitimacy deficits of platform decision-making, a “multistakeholderist response to increase civil society’s influence in platform governance through transparency, consultation and participation” and a “rule of law response” extending “the platform/state analogy to argue that platform governance should follow the same rule of law principles as public institutions”. Most recently, the Behavioral Insights Team cooperated with Meta and organized a deliberative assembly for user integration into social media decision-making at scale. These more recent developments are illuminating in that they point to a widespread discontent with platform rule (and platform rules).

- A major social network has created an Oversight Board to help with content decisions and algorithmic recommendations. A gaming label is experimenting with player councils to help programmers make exciting choices. German public television's advisory council wants to create a people’s panel to ensure more input into programming decisions. The world’s largest online knowledge platform has, since its inception, let users (and user-editors) decide upon content-related conflicts. All of these examples share one fundamental goal: ensuring that decisions on communication rules, for people and/or mediated through algorithms, are better, more nuanced, and considered more legitimate through broader involvement.

- What is the solution to the challenges of socially reconnecting platform decisions and practices and public values? Do we need an independent and pluralistic body that has advisory or binding decision-making powers, consisting of representatives of users, or of governmental and civil society bodies, or a combination thereof? And if yes, at which level (platform-specific, national, regional, international)? Deliberative forums have been used in different policy settings for a long time. Groups of randomly selected individuals are tasked with addressing a particular issue or question of public concern. Similar councils, usually expert-led or on the basis of multistakeholder representation, have existed for traditional media, especially public service media. However, platform councils – councils advising social media platforms – are a more recent phenomenon.

- The Oversight Board is a global, currently platform-specific, quasi-judicial and advisory platform council. The board is staffed with representatives from academia and civil society who, on the basis of Meta’s community standards and international human rights standards, assess Meta's decisions on the deletion of user-generated content on its Facebook and Instagram platforms.

- The Oversight Board may be seen as the first attempt to partially open up the decision-making system of a commercial platform to the “outside”; but to form and position this “outside” in a way that not only promises systemic improvements for users, but actually triggers them beyond the individual cases remains a challenge. The councils of other companies, including TikTok (which set up several regional “councils”) and Twitter (which disbanded its Trust and Safety Council after changes in ownership), have not been institutionally strong enough to confirm a global trend. It is therefore incumbent upon scholars to develop and provide innovative concepts for a democracy-friendly design of platform councils and to innovate participatory ideas.

The role of platform councils

- The key added value of Social Media Councils is to provide more legitimacy to the rules and algorithmic practices of platforms. Any form-related decision has to follow this function.

- Our research has confirmed that no single solution to recoupling online spaces to public values exists. Indeed, key questions that have to be asked and answered in the development of social media councils relate to:

- the relationship of social media councils to the platform and modes of enforcement of their decisions,

- the geographical scope of SMCs: local, national, regional, global,

- the scope of SMCs: industry-wide or specific for each platform,

- the set-up of SMCs: by a platform? through a multi-stakeholder process? by states? as a self-regulatory approach?

- its members/involvement: who constitutes the SMC? Users? Experts? Citizens?

- its focus: individual content regulation decisions, the design of algorithms? General human rights policy?

- procedure: how are decisions arrived at?

- funding: how to guarantee independence?

- risks: how to ensure that SMCs are not coopted by bad actors?

- culturally and geographically contingent factors: Which public values are relevant in the context of hybrid platform governance? Which conditions need to be met for the SMCs to be accepted in regional/local contexts?

- Drawbacks exist. They include a weakening of state regulators, confusion of responsibility, ethics-washing, a normative fig-leaf effect, and a globalist approach to speech rules that is non-responsive to local and regional practices. There is a lack of a singular approach to improving the legitimacy of platform rules and their algorithmic governance of information and communication flows.

- Internal SMCs are implemented by platforms on their own terms to strengthen their internal processes with specific procedural safeguards. The Meta Oversight Board is an example of such an internal SMC. By contrast, external SMCs are introduced externally and can be either voluntary or obligatory. The broader the membership of a Social Media Council is, the more legitimate its decisions might appear to be. However, there are substantial trade-offs to consider when incorporating too many non-experts into the assessment of rules and algorithmic practices. The need for access to specific information about a platform's operations raises issues of confidentiality.

- The new European digital law packages include references to civil society integration into the national compliance structures of the Digital Services Act without specifying how exactly this process should be implemented.

- The German national implementation law includes a reference to a cross-platform social media council to be set up by parliament to advise the national Digital Services Coordinator.

The potential impact of platform councils

- While the basic conception of platform councils – more inclusivity in decision-making and communication space-as-product design – is relatively clear, there is limited evidence, based on the studies contributed by fellows within the project, how to best construct a platform council.

- The most effective approach seems to be one of regulated self-regulation which combines commitments by states to develop a normative framework and engages platforms’ interest to meet compliance obligations. Platform councils could be established at different levels in different set-ups in order to iterate attempts to increase the legitimacy of platform rules and algorithmic recommender orders. The impact of the various approaches should then be compared and contrasted and the most effective approach should be baselined for future implementation.

- A key concept to serve as a guide in the development of social media councils is that of multistakeholderism. To effectively address both global and local challenges and interests, SMC governance structures must be tailored to meet the needs of all, including non-users and non-onliners.

The design of platform councils

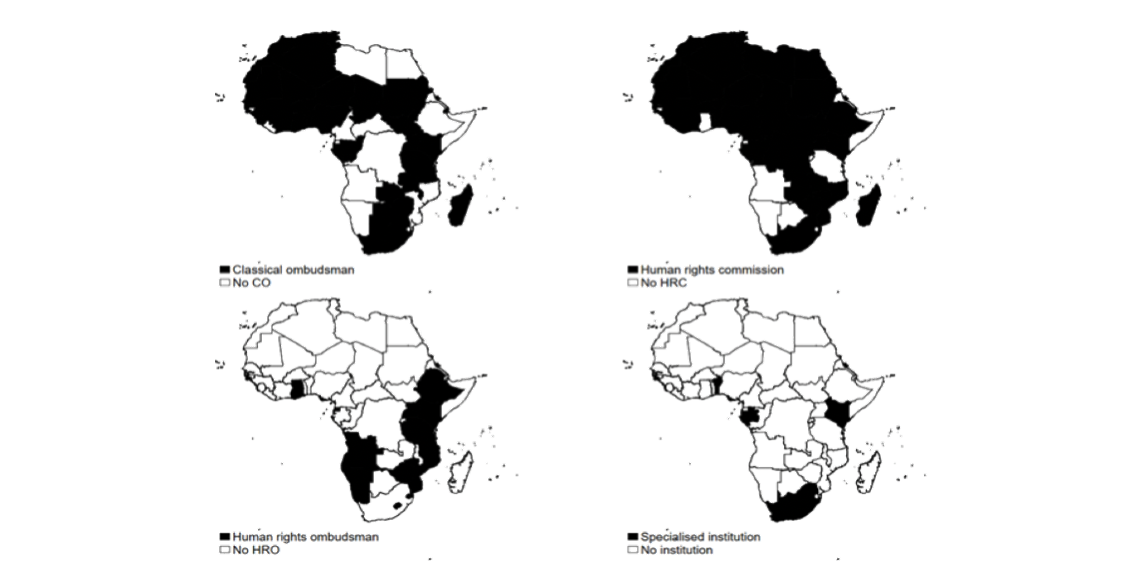

- To ensure responsiveness to citizens' concerns resulting from participation, channels must exist for citizens' concerns to be heard, and threats to human rights must be considered. Machine-learning tools can be used to identify recurring speech conflicts. National social media councils may be more effective in addressing specific issues that are unique to a particular country, such as local laws and regulations, cultural sensitivities, and political contexts. They may also be more accessible to marginalized groups within that country who may not have the resources to participate in regional or global councils.

- Regional social media councils may be useful in addressing issues that affect multiple countries within a region, such as language barriers, regional political and cultural contexts, and shared challenges. Regional councils may also provide a platform for regional coordination and collaboration on issues related to social media governance.

- Global social media councils may be necessary to address issues that transcend national or regional boundaries, such as cross-border hate speech, disinformation campaigns, and the influence of global social media platforms on local cultures and politics. A global council may also provide a platform for global coordination and collaboration on social media governance issues. Ultimately, the decision of whether to establish a national, regional, or global social media council should depend on the specific context and objectives of the council. A multi-level approach that involves collaboration and coordination among different levels of councils may also be necessary to effectively address the complex and multifaceted challenges of social media governance. We can learn from broadcasting councils which partially perpetuate prevailing power dynamics in society, with underrepresentation of socially marginalized groups such as the poor, disabled people, religious minorities, PoC, LGBTIQ*, and a relatively high average age of members. Furthermore, men outnumber women and people of other genders in these councils.

- Expertise matters: Incorporating technical expertise into platform council decision-making requires balancing the need for pertinent information, potentially from someone with ties to the online service being evaluated, with the importance of ensuring the independence of the expert's perspective.

- To address these gaps, a multistakeholder community is required, which brings together stakeholders from various sectors to fill the many research and resource gaps. If Social Media Councils are not properly empowered, they may end up being composed of non-diverse individuals who provide non-binding suggestions to the predominant companies on how they can superficially conform to human rights constraints. However, with adequate resources and authority, and a focus on inclusivity and promoting marginalized and minority groups, they have the potential to compel platforms and regulators to address systemic inequalities, create safer and more welcoming social media environments, and establish more egalitarian forms of social media governance.

The way forward

- This project has focused on investigating the potential of platform councils as instruments and forums for more digital democracy. Especially when social media councils are established with the goal of enabling an appropriate assessment of individual pieces of content, the construction runs into representation problems that are almost impossible to solve, because societies have become so complex that it is hopeless to include all cultural, social and other perspectives in a single body. This is already a problem with national broadcasting councils, since there are hardly any associations left that represent large parts of society. Much attention was paid to inclusivity in the design of the Meta Oversight Board, but it is still criticized in this respect.

- Meta has introduced a novel approach for decision-making in the development of apps and technologies known as the Community Forum. The concept of community forums and online citizen assemblies could be extended to the metaverse. This creates new opportunities for participatory democracy and deliberative decision-making processes. The best way forward is to integrate the interests of diverse stakeholders, iterate models of social media councils and to innovate new models of recoupling public interest and private orders in hybrid communication spaces. There can be value in not waiting for the perfect solution of one council but to try out various models in parallel, creating a regulatory system in which these observe each other and hold each other to account.

- Against this background, we want to propose an actor that can solve a specific problem for which no convincing solution exists so far. This is not a matter of assessing individual decisions on content, but rather of the level of rulemaking by platforms, and there specifically of rules that affect public interests in a specific way and are therefore in particular need of legitimation in the sense mentioned above. Building on substantial previous work, we propose to establish a commission dedicated to furthering the application of rule of law standards, of democratic values and of human rights on the Internet, in particular within platforms.

- The commission is focused on ensuring human rights online. It can be modeled after the European Commission for Democracy through Law (“Venice Commission”), an independent consultative body within the Council of Europe system that provides expertise and conducts studies on issues of constitutional law and democratic institutions with a focus on good practices and minimum standards.

- Its main task is to focus on policy questions, not on individual pieces of content, and on public interest questions. These include, particularly, exceptions for public figures, inauthentic behaviour, rules for media content and algorithmic recommendations in light of content diversity. These areas are clearly not to be regulated by platforms alone, but neither should they be regulated through state rules.

- The Commission can build on, and be staffed by, a diverse set of experts from selected organizations and institutions established in the platform governance field, including research centers and networks of experts.

We gratefully acknowledge the contributions by Martin Fertmann, Josefa Francke, Christina Dinar, Lena Hinrichs and Tobias Mast.